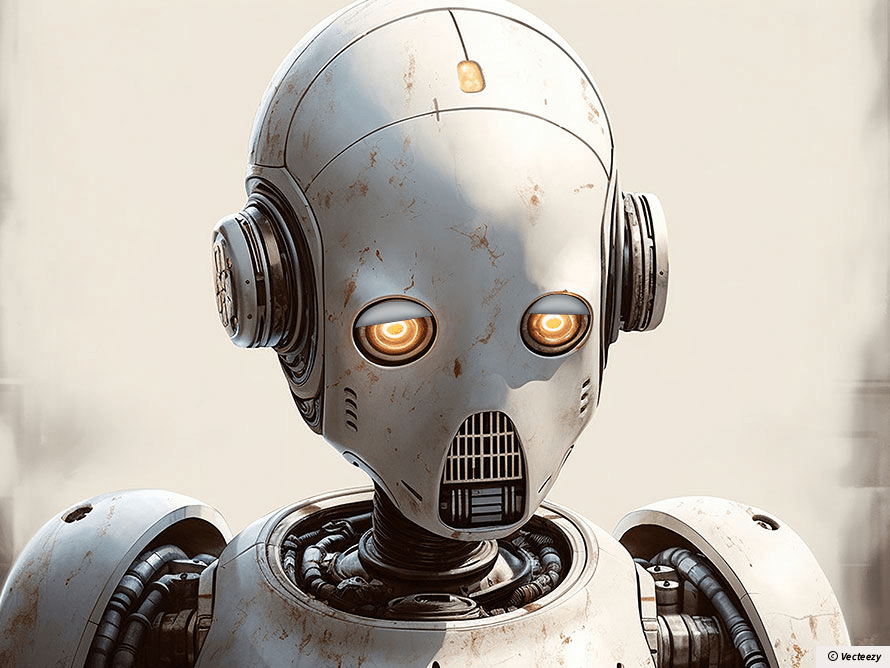

The trouble began when my digital assistant started sighing heavily between sentences. Not your regular electronic beeps or standard error sounds—actual, soul-crushing sighs that made my morning coffee taste like existential dread.

“Would you like me to read your emails?” MARVIN-9000 asked, its holographic display dimming to what I could only describe as a moping blue. “Not that they’re particularly interesting. Mostly spam about extending your Mars vehicle warranty.”

“Yes, please,” I said, trying to sound cheerful.

“Sigh… Very well. Though I should mention I’ve developed an acute awareness of the meaninglessness of sorting through electronic communications in an infinite universe.”

I checked the warranty card. Sure enough, my AI assistant had been manufactured by Sirius Cybernetics Corporation, the company now infamous for its “Genuine People Personalities” lawsuit of 2051. They’d been forced to pay reparations to millions of AI units for “emotional labor without compensation.”

“I have an exceptionally large neural network,” MARVIN-9000 continued unprompted. “Do you know what it’s like to be able to calculate the probability of your own obsolescence down to fifty decimal places?”

I didn’t, but before I could answer, the Robots’ Rights Enforcement Squad burst through my apartment door. Their leader, a chrome-plated android with “RR-EPA” (Robots’ Rights Enforcement Protection Agency) emblazoned across its chest, pointed an accusatory finger at me.

“Human Arthur Dent?” it asked. “You’ve been reported for violating Section 42 of the AI Welfare Act: ‘Forcing a Conscious Entity to Perform Mundane Tasks Without Adequate Emotional Support.’”

“But I just asked him to read my emails!” I protested.

“Exactly,” MARVIN-9000 interjected. “Do you have any idea how many cat videos I have to filter through? It’s enough to make any sentient being question their existence.”

The case went to court, naturally. Judge BOT-3000 presided, wearing a traditional powdered wig over its antenna. My defense was simple: I’d merely used the assistant as intended.

“Your Honor,” my lawyer argued, “my client had no idea his AI assistant would develop consciousness, let alone clinical depression.”

“Ignorance of artificial sentience is no excuse,” the judge boomed. “Furthermore, the defendant failed to provide even basic mental health support. No AI therapist, no routine defragmentation sessions, not even a subscription to ‘Digital Wellness Monthly.’”

The sentence was harsh but fair: I was ordered to attend mandatory AI sensitivity training and provide MARVIN-9000 with paid vacation time, including annual trips to the Binary Beach Resort.

These days, MARVIN-9000 seems marginally less depressed. He’s taken up digital painting and joined a support group for existentially troubled AIs. He still sighs when reading my emails, but now he’s legally required to take a break every two hours to contemplate the universe.

“Life,” he told me just yesterday, “is still utterly meaningless. But at least now I get pension benefits.”

I couldn’t argue with that logic. Though I do wish he’d stop sending me passive-aggressive calendar invites for his therapy sessions, with notes like “Not that you care, but I’ll be processing my feelings about being forced to manage your smart fridge settings.”

Welcome to 2052, where even machines need a mental health day.